On Kaggle, you can win by blending the machine-learning algorithms with the best raw performance metrics. In real life, this approach rarely works.

A common mistake of inexperienced data scientists is to believe that machine learning is the only science they need to build successful predictive systems.

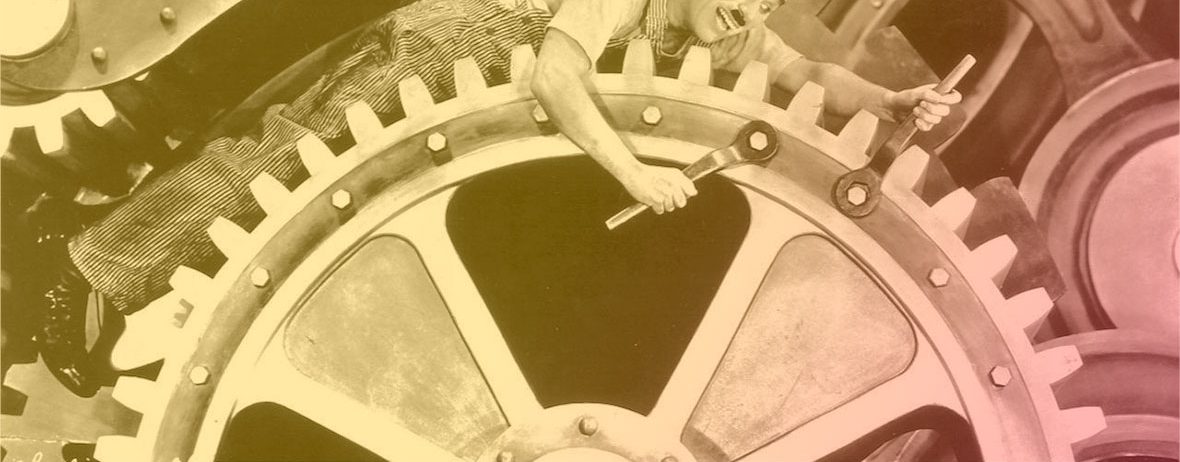

Machine-learning textbooks usually focus on individual algorithms that work in isolation. When you assemble multiple algorithms into a complex system, and that system is fed real-world data and interacts with real-world users, a lot of unexpected things happen.

In real life, systemic stability is what separates success from failure. It isn’t determined by the individual algorithms but by the overall architecture. Achieving systemic stability requires a control-theory mindset at all stages of the design process.

Here are 5 practical guidelines that summarize the design philosophy that we’ve successfully used to achieve systemic stability at Tinyclues.

1. Build an architecture that survives noise

Machine-learning algorithms optimize loss functions under assumptions about noise (which typically show up as an extra regularization term). Gaussian noise is a popular noise model in machine-learning theorems, not because it happens in real life, but because it makes the math easier.

The actual noise that you can encounter is unlikely to fit any simple model:

- The original noise from the actual problem that you are studying may be intrinsically hard to model. For example, the B2C consumption patterns that we work on exhibit a customer-attribute noise component (more or less Gaussian), a time-series noise component (extremely sparse and hard to model beyond the obvious cycles), plus some ticket-size noise (totally different patterns). As a result, the noise on the resulting revenue turns out to be impossible to model reliably.

- Noise from the data collection process. Data connections will never be 100% reliable and your system will have to continue to function reasonably well under your typical data collection noise patterns. Examples of data collection noise patterns we encounter so frequently that they influenced our predictive strategy: duplicate events, missing events for certain dates (recovered later, or never recovered), aberrations on all numerical values (such as prices), encoding inconsistencies.

- Noise artefacts from earlier algorithms in the processing pipeline. When you combine multiple algorithms, you multiply noise. If you’re not careful, you can end up with big problems.

Do not assume that you can model noise. Do not assume that you can get rid of noise. Noise happens. Your architecture must survive noise.

This is why you need to have noise reduction in mind at all steps of the design process. In practice: whenever you combine algorithm X with algorithm Y (eg, a feature engineering algorithm with a scoring algorithm), make sure that all possible artefacts of algorithm X are damped and not amplified by algorithm Y.
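As a rough illustration of damping rather than amplifying upstream artefacts, here is a minimal sketch of two defensive steps placed between data collection and a scoring algorithm: dropping duplicate events and clipping aberrant numerical values. The event fields (`user_id`, `timestamp`, `price`), the function names and the threshold `k` are assumptions made for this example, not a description of the Tinyclues pipeline.

```python
from statistics import median

def dedupe_events(events):
    """Drop exact duplicate events while preserving the original order."""
    seen = set()
    unique = []
    for event in events:
        # Hypothetical event schema: these three fields identify an event.
        key = (event["user_id"], event["timestamp"], event["price"])
        if key not in seen:
            seen.add(key)
            unique.append(event)
    return unique

def clip_to_robust_range(values, k=5.0):
    """Clip values to median +/- k * MAD (median absolute deviation).

    The median and the MAD are barely affected by a few aberrant values,
    so one absurd price cannot shift the clipping range itself.
    """
    values = list(values)
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # No usable scale estimate: leave the values untouched.
        return values
    low, high = center - k * mad, center + k * mad
    return [min(max(v, low), high) for v in values]

prices = [10, 12, 11, 9, 10, 100000]  # the last price is a collection aberration
print(clip_to_robust_range(prices))
```

The point of the design is that the downstream algorithm never sees the aberrant value at full magnitude, whatever caused it.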

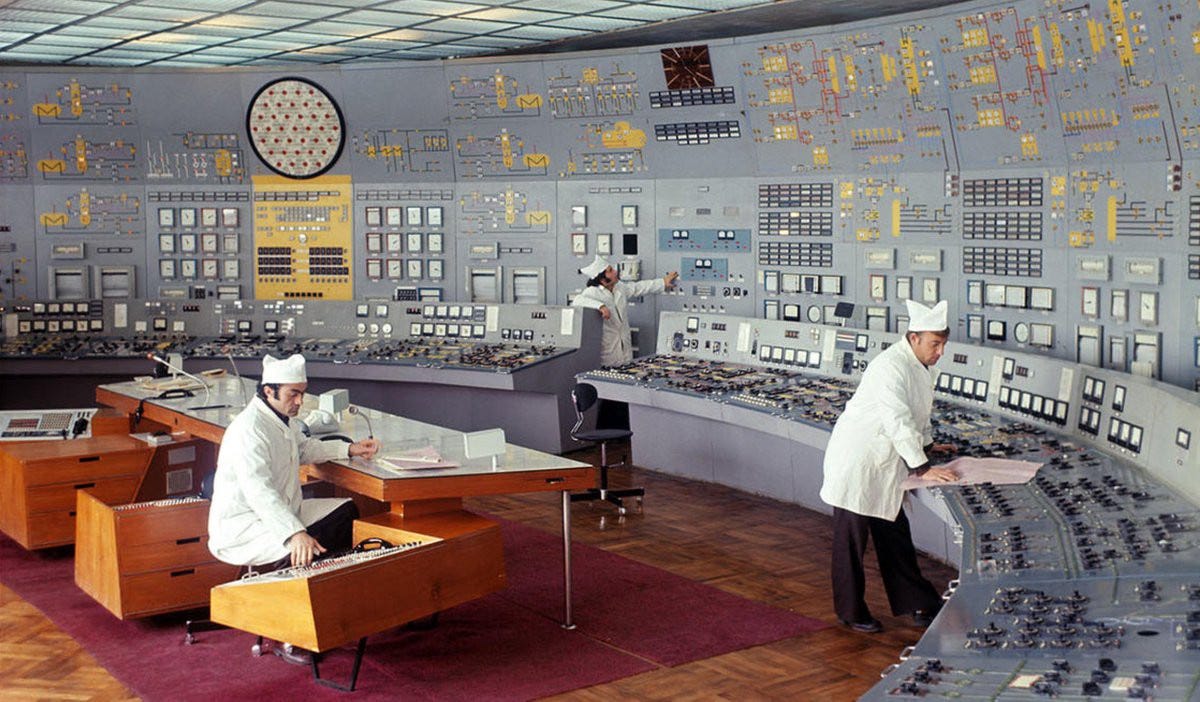

2. Design indestructible controllers

A controller is a piece of software that handles the final decision-making downstream of the core predictive engine.

Compared to fancy machine-learning algorithms, controllers look simple and it is easy to overlook them. However, they are the most critical components for systemic stability. So, never overlook controllers! Consider them as external regularizers to your learning problem.

The campaign dispatch problem provides a great example. A key feature of Tinyclues is the possibility for marketing teams to optimize customer marketing plans where each customer is eligible for multiple campaigns but there is a cap on how many campaigns they can receive per week.

Let’s look at the simplified problem with two campaigns A and B and max_campaigns_per_user=1.

Upstream of the controller, your core predictive engine generates well-calibrated scores: for each user in the customer database, you have good predictions of the expected revenues if the user receives campaign A or campaign B.

Here the controller is the algorithm that consumes these scores and splits the database into customers receiving A and customers receiving B.

In the early days of Tinyclues, the first controller we tried was Argmax: each customer receives the campaign where their score is maximal. Argmax is the logical mathematical solution to the problem but, in the real world, it is an absolute disaster. Here is why:

- First, Argmax amplifies all the flaws from the scoring algorithm. It greedily allocates customers to wherever their score overfits the most.

- Even if there were zero overfit, Argmax would still exhibit unacceptable macro behaviors. Scores between different campaigns tend to be highly correlated: some customers are just better customers, whatever the campaign. If the ROC curve for campaign A is a bit sharper than the ROC curve for campaign B, then all the best customers will be allocated to A, which results in really bad outcomes (for customers and for the marketing team).
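The second failure mode can be reproduced with a toy simulation. In the sketch below, both campaign scores are driven by the same per-customer intensity, and campaign A's score is slightly steeper than campaign B's. The slopes, the offset and the noise level are arbitrary values chosen for illustration, not measured figures.

```python
import random

def argmax_dispatch(scores_a, scores_b):
    """Give each customer the campaign with the higher score (ties go to A)."""
    return ["A" if a >= b else "B" for a, b in zip(scores_a, scores_b)]

def simulate_argmax(n_customers=10_000, seed=0):
    rng = random.Random(seed)
    # Shared intensity: some customers are simply better customers.
    intensity = [rng.random() for _ in range(n_customers)]
    # Campaign A is a bit "sharper" (steeper in intensity) than campaign B.
    scores_a = [1.2 * t + rng.gauss(0.0, 0.02) for t in intensity]
    scores_b = [1.0 * t + 0.05 + rng.gauss(0.0, 0.02) for t in intensity]
    allocation = argmax_dispatch(scores_a, scores_b)

    share_a_overall = allocation.count("A") / n_customers
    # Rank customers by intensity and look at the best 10%.
    ranked = sorted(range(n_customers), key=lambda i: intensity[i], reverse=True)
    top_decile = ranked[: n_customers // 10]
    share_a_top = sum(allocation[i] == "A" for i in top_decile) / len(top_decile)
    return share_a_overall, share_a_top

overall, top = simulate_argmax()
print(f"share of customers sent to A: {overall:.2f}")
print(f"share of top-decile customers sent to A: {top:.2f}")
```

With these settings, roughly three quarters of the database and essentially the whole top decile end up in campaign A, even though no score is overfit.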

A typical workaround is to randomize the scores via Thompson sampling. That may work under the hypothesis of a simple noise model, and it adds an explore/exploit parameter to the controller. In our case, however, it did not produce suitable macro behavior.
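For readers unfamiliar with the technique, here is a minimal textbook-style sketch of Thompson sampling for one customer, assuming each campaign's conversion rate has a Beta posterior. The `posteriors` structure and the function name are hypothetical; this shows the general idea, not the controller we tested.

```python
import random

def thompson_dispatch(posteriors, rng):
    """Pick a campaign by sampling one plausible rate per campaign.

    posteriors maps a campaign name to the (alpha, beta) parameters of a
    Beta posterior over that campaign's conversion rate for this customer.
    """
    draws = {
        campaign: rng.betavariate(alpha, beta)
        for campaign, (alpha, beta) in posteriors.items()
    }
    # Argmax over the random draws instead of over the point estimates.
    return max(draws, key=draws.get)

rng = random.Random(0)
# Two campaigns with identical evidence: the choice is close to a coin flip.
picks = [thompson_dispatch({"A": (50, 50), "B": (50, 50)}, rng) for _ in range(2000)]
print(picks.count("A") / len(picks))
```

The randomization only helps where the posteriors overlap. When one campaign's scores dominate for the best customers, the draws still favor that campaign almost every time.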

Looking more carefully at the situation, we realized that scores really have two components: an intensity component (how likely a customer is to buy any product) and a specificity component (assuming a customer buys a product, how likely is it to be a product associated with campaign A?).

While it is tricky to reliably separate the two components, knowing that they exist helped us design an incredibly robust dispatch controller.

Rather than naive numerical optimization, our source of inspiration was combinatorial thinking taking into account business constraints and marketing savviness. This approach resulted in a dispatch algorithm that displays healthy behaviors even in the presence of overfit or scoring calibration issues.

3. Monitor macro behaviors, not just loss functions

The dispatch problem also illustrates a typical control issue that arises if you blindly trust machine-learning algorithms: loss functions that are defined as sums of local penalties fail to penalize unhealthy macro behaviors.

You may end up in situations where the optimization is OK at a local level (eg, each customer receives content that is reasonably relevant) but catastrophic at a macro level (eg, there are massive imbalances in the allocation of campaigns, or customers are locked in a content bubble.)

The real-world problem that you are solving cannot be modelled by a technical loss function.

Monitor the macro behavior of your system with high level metrics that are pragmatic and business-centric.

The high-level metrics of self-driving cars (miles between disengagements, accident rates, dispatch reliability) are different from the loss functions used by their machine-learning algorithms.

At Tinyclues, we closely monitor the macro behavior of our clients’ marketing programs across multiple months, with high-level metrics that capture audience overlaps between campaigns and customer-exposure rates for different engagement levels.
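To make this concrete, here is a minimal sketch of two such metrics: pairwise audience overlap (measured here with the Jaccard index, which is our choice for the example) and exposure rate per engagement segment. The data structures and function names are assumptions for illustration only.

```python
from collections import Counter
from itertools import combinations

def audience_overlap(audiences):
    """Jaccard overlap between every pair of campaign audiences.

    audiences maps a campaign name to the set of customer ids it targets.
    """
    overlaps = {}
    for name_a, name_b in combinations(sorted(audiences), 2):
        union = audiences[name_a] | audiences[name_b]
        shared = audiences[name_a] & audiences[name_b]
        overlaps[(name_a, name_b)] = len(shared) / len(union) if union else 0.0
    return overlaps

def exposure_rate_by_segment(audiences, segment_of):
    """Share of customers in each segment who received at least one campaign.

    segment_of maps every customer id in the database to an engagement segment.
    """
    exposed = set().union(*audiences.values())
    totals = Counter(segment_of.values())
    exposed_counts = Counter(segment_of[c] for c in exposed if c in segment_of)
    return {segment: exposed_counts[segment] / totals[segment] for segment in totals}

audiences = {"A": {1, 2, 3, 4}, "B": {3, 4, 5}}
segment_of = {1: "active", 2: "active", 3: "active",
              4: "inactive", 5: "inactive", 6: "inactive", 7: "inactive"}
print(audience_overlap(audiences))
print(exposure_rate_by_segment(audiences, segment_of))
```

Neither number appears in a per-customer loss function, yet a sudden jump in overlap or a collapse in exposure for one segment is exactly the kind of macro behavior worth alerting on.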

Building the right set of metrics is business-specific and solution-specific. It requires a deep understanding of the real-world problem that you are solving and the failure modes of your system. And if you’re lucky enough, you may find a way to integrate those metrics into your learning losses.

Building these metrics takes years and constitutes a form of data network effect.

4. Beware hyperparameter creep

Exposing too many hyperparameters can be a symptom that a predictive system isn’t ready for production. If you can’t figure out decent default values for your core hyperparameters, or if you expect to have to constantly tweak them in production, then your system is immature and probably unstable.

Machine learning is about machines that learn. If you have too many hyperparameters, if they change too often, then you’re adding an unpenalized and uncontrollable human-learning metalayer.

This is better understood in organizational terms. At Tinyclues, we have two Data Science teams:

- the R&D Data Science team, which designs the predictive stack;

- the Data Ops team, responsible for client setups and predictive quality in production.

The Data Ops team has serious machine-learning skills but their job isn’t to second-guess our predictive engine. They have access to a complete admin back-office but the core predictive hyperparameters have very clear default values.

The very few parameters that are modified on a client-by-client basis are all related to client-specific business goals. A typical example is the parameter that controls the “intensity-vs-specificity” trade-off (favoring short-term revenue by overexposing the most active customers vs re-engaging less active customers.)

But the job of the Data Ops team isn’t to adjust gradient descent rates, penalization coefficients or dimensions of latent feature layers. The job of the R&D Data Science team is to design a system that is 100% controllable with business-centric inputs only.
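One way to enforce this separation in code is to keep engine hyperparameters and client settings in different, immutable configuration objects. The sketch below is purely illustrative: the class names, field names and default values are placeholders invented for this example, not actual Tinyclues settings.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EngineDefaults:
    """Owned by R&D. Placeholder values; not meant to be tuned per client."""
    learning_rate: float = 0.01
    l2_penalty: float = 1e-4
    latent_dim: int = 64

@dataclass(frozen=True)
class ClientSetup:
    """Owned by Data Ops. Only business-centric inputs live here."""
    client_name: str
    # 0.0 favors specificity (re-engaging less active customers),
    # 1.0 favors intensity (short-term revenue from the most active ones).
    intensity_vs_specificity: float = 0.5

    def __post_init__(self):
        if not 0.0 <= self.intensity_vs_specificity <= 1.0:
            raise ValueError("intensity_vs_specificity must be in [0, 1]")

setup = ClientSetup(client_name="example-client", intensity_vs_specificity=0.8)
print(setup, EngineDefaults())
```

Because the client-facing object has no field for learning rates or penalties, tweaking them in production requires a code change reviewed by the team that owns the engine.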

5. Always fail on the right side

Let’s face it: horrible things will happen. Someone will push a predictive bug in production. Weird anomalies in incoming data will mess up key components of your stack. Users will do crazy things that you never expected.

Of course, you need to build the QA and instrumentation layer that will allow you to catch these incidents early on. But the best way to prepare is to build each component with the worst-case scenario in mind.

Failure modes are hugely asymmetric in terms of business outcomes. A confused self-driving car should pull over rather than randomly accelerate. But in the left lane of a highway, it shouldn’t abruptly decide to park.

Based on your domain-specific knowledge, identify the preferred failure mode for each component of your stack and design your controllers with these failure modes in mind.
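As an illustration, here is a minimal sketch of a controller wrapper that checks incoming scores and switches to an explicit fallback when they look broken. It assumes that scores are expected revenues (finite and non-negative) and that the preferred failure mode is supplied by the caller as `fallback`. The function names and the `max_invalid_share` threshold are hypothetical.

```python
import math

def is_valid_score(score):
    """A score is assumed to be a finite, non-negative expected revenue."""
    return isinstance(score, (int, float)) and math.isfinite(score) and score >= 0.0

def dispatch_with_fallback(scores, dispatch, fallback, max_invalid_share=0.01):
    """Run dispatch on valid scores, or fall back to the preferred failure mode.

    scores maps customer ids to scores. fallback encodes the domain-specific
    decision about what should happen when the upstream engine misbehaves.
    """
    if not scores:
        return fallback(scores)
    valid = {user: s for user, s in scores.items() if is_valid_score(s)}
    invalid_share = 1.0 - len(valid) / len(scores)
    if invalid_share > max_invalid_share:
        # Too many broken scores: do not trust the rest either.
        return fallback(scores)
    try:
        return dispatch(valid)
    except Exception:
        # Any failure inside the controller also lands on the chosen side.
        return fallback(scores)

def send_to_best(scores):
    """Toy dispatch: target the single best-scored customer."""
    return sorted(scores, key=scores.get, reverse=True)[:1]

def send_nothing(scores):
    """Toy fallback: assume that skipping a send is the safer failure."""
    return []

print(dispatch_with_fallback({"u1": 1.0, "u2": 2.0}, send_to_best, send_nothing))
print(dispatch_with_fallback({"u1": float("nan"), "u2": 2.0}, send_to_best, send_nothing))
```

Whether "send nothing" is really the right side depends on the business. What matters is that the choice is made explicitly at design time rather than discovered during an incident.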

All systems fail.

Antifragile systems fail on the right side.

Suggested further reading

- On regularizing outputs of recommendation systems based on business goals: https://dl.acm.org/doi/10.1145/3240323.3240372

- On Combinatorial Multi-Armed Bandit: http://proceedings.mlr.press/v28/chen13a.pdf

- On uncertainty estimation in Deep Learning: http://papers.nips.cc/paper/7219-simple-and-scalable-predictive-uncertainty-estimation-using-deep-ensembles.pdf

- Thompson sampling methods comparison: https://arxiv.org/pdf/1802.09127.pdf

- Bayesian Models for Click-Through Rate Prediction: https://quinonero.net/Publications/AdPredictorICML2010-final.pdf